A Look Under the Hood: How Phones Tell Us What Users Are Doing

Introduction

Most apps react to what users tap.

We’re interested in something that happens before the tap.

Every smartphone constantly senses motion, orientation and rotation. Not in a creepy way - just physics. And when you look closely, these raw signals tell a surprisingly rich story about what’s happening in a user’s real world.

At ContextSDK, we’ve learned how to decode these signals. By interpreting on-device motion and orientation data in real time, we can understand how a phone is being used in a given moment - whether someone is stationary or moving, focused or distracted, settled in or just passing through.

This is the foundation of context-aware decisioning: not guessing intent from past behavior, but understanding the current situation a user is in, right now.

Four Sensors. One Question: “What’s Happening Right Now?”

Modern iPhones expose four motion-related signals in real time. Two are raw sensor readings; gravity and attitude are derived on-device by combining them:

- Accelerometer - measures acceleration (changes in velocity) along three axes

- Gyroscope - measures how fast the phone is rotating around each of its three axes

- Gravity - tells us how the phone is positioned in 3D space

- Attitude - describes device orientation relative to a reference frame

Individually, these signals look noisy and abstract. Together, they start to describe very human situations:

- Is the phone lying flat or held upright?

- Is it moving smoothly or erratically?

- Is the user stationary, walking, or rotating the device?

To explore this, we built a small internal app that records raw sensor data while we perform simple, controlled movements.

Then we did something very low-tech:

We filmed the movements and plotted the sensor data next to them.

Suddenly, the data made sense.

That is the lesson we took from it: making sense of these signals doesn’t start in dashboards. It starts with observing how phones behave in the real world - how they move, rest, tilt and rotate in everyday situations.

What the Data Reveals (Without Knowing Anything Personal)

Here’s the important part:

None of this data contains identity, content or intent. It’s just motion and physics.

But even so, patterns emerge.

Accelerometer + Gyro: Movement and Rotation

These sensors are excellent at detecting change.

- Walking vs. sitting

- Smooth motion vs. jittery movement

- Subtle rotations vs. deliberate interaction

They form the backbone of how we detect activity states today.

When the phone moves along the axis perpendicular to its screen (straight up and down, if it is lying flat), the accelerometer’s Z-axis shows clear, repeatable patterns. Paired with gyroscope data, this helps distinguish deliberate interaction from passive motion - even in very short time windows.

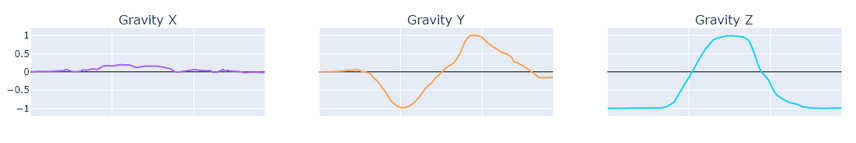
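To make the idea concrete, here is a minimal sketch of how a short window of accelerometer and gyroscope samples could be turned into a coarse motion label. It is an illustration, not ContextSDK's actual classifier: the function name `motion_state`, the label strings and both thresholds are hypothetical, and we assume acceleration in units of g and rotation rate in rad/s.

```python
import math
from statistics import pstdev


def motion_state(accel, gyro, accel_std_threshold=0.05, gyro_mean_threshold=0.3):
    """Label one short window of samples.

    accel: list of (x, y, z) accelerometer samples in g (assumed unit)
    gyro:  list of (x, y, z) rotation-rate samples in rad/s (assumed unit)
    Both thresholds are illustrative values, not tuned constants.
    """
    # Magnitudes make the features independent of how the phone is held.
    accel_mag = [math.sqrt(x * x + y * y + z * z) for x, y, z in accel]
    gyro_mag = [math.sqrt(x * x + y * y + z * z) for x, y, z in gyro]

    # A resting phone reads a near-constant ~1 g, so the spread stays small.
    moving = pstdev(accel_mag) > accel_std_threshold
    # Sustained rotation shows up as a high average rotation rate.
    rotating = sum(gyro_mag) / len(gyro_mag) > gyro_mean_threshold

    if moving and rotating:
        return "moving_and_rotating"
    if moving:
        return "moving"
    if rotating:
        return "rotating"
    return "still"
```

Using the spread of the acceleration magnitude rather than a single axis keeps the sketch independent of how the phone happens to be oriented. A real system would need more features and thresholds validated against recordings.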

Gravity: The Missing Piece

Gravity turned out to be especially interesting.

Unlike acceleration, gravity gives a stable reference for how the phone is positioned in 3D space. Face-up. Face-down. Upright. Tilted.

This adds a layer of clarity:

- A stationary phone on a table feels very different from one held in hand

- Two users can be “inactive” in analytics but in totally different physical states

Adding gravity data significantly improves how confidently we can classify moments.
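As a rough illustration of why gravity is so convenient, the sketch below maps a single gravity vector to a coarse pose. It assumes the usual iOS device axes (X to the right of the screen, Y toward the top, Z out of the screen toward the viewer) and a vector normalized to about 1 g, so a phone lying face-up reads roughly (0, 0, -1). The function name, the labels and the 0.8 threshold are hypothetical choices made for this example.

```python
def classify_orientation(gx, gy, gz, threshold=0.8):
    """Map a gravity vector (in g, device frame) to a coarse pose label.

    Assumes Z points out of the screen, so gravity pulls along -Z when the
    phone lies face-up. The threshold is an illustrative value.
    """
    if gz <= -threshold:
        return "face_up"
    if gz >= threshold:
        return "face_down"
    if abs(gy) >= threshold:
        return "upright_portrait"
    if abs(gx) >= threshold:
        return "upright_landscape"
    # No single axis dominates: the phone is held at an angle.
    return "tilted"
```

For example, `classify_orientation(0.0, -0.7, -0.7)` falls through to `"tilted"`, the kind of pose a phone has when it is held in the hand while reading.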

Why Some Sensors Are Harder Than They Look

Not every signal is equally useful.

The attitude signal, for example, measures rotation relative to a reference frame set at initialization. That makes it mathematically powerful, but practically tricky.

Without storing that reference, absolute orientation becomes ambiguous. Small rotations look clean. Larger ones introduce jumps and discontinuities that are hard to use reliably in short, real-world sessions.

In other words: fascinating, but not ideal for production context detection.

That’s part of the work: knowing what not to use.
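One common source of such jumps, when orientation is expressed as angles such as yaw, is wrap-around: an angle that passes +180° reappears near -180°, so a small physical rotation looks like a huge change. We are not claiming this is the only effect behind the discontinuities described above; the sketch below only shows the wrap-around case and one standard way to handle it. The helper names are hypothetical and angles are assumed to be in radians.

```python
import math


def wrapped_delta(prev, curr):
    """Smallest signed rotation from prev to curr, in radians, in [-pi, pi)."""
    return (curr - prev + math.pi) % (2 * math.pi) - math.pi


def unwrap(angles):
    """Rebuild a continuous angle series from samples that wrap at +/- pi."""
    if not angles:
        return []
    continuous = [angles[0]]
    for prev, curr in zip(angles, angles[1:]):
        # Accumulate small true steps instead of trusting the raw values.
        continuous.append(continuous[-1] + wrapped_delta(prev, curr))
    return continuous
```

With yaw samples `[3.0, 3.1, -3.1, -3.0]`, the raw difference between the second and third sample is -6.2 rad, while `wrapped_delta(3.1, -3.1)` is about 0.083 rad, the rotation that actually happened. Unwrapping removes the jump but does not fix the missing absolute reference.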

From Raw Signals to Real Moments

Raw sensor data arrives at ~100 data points per second, per axis. That’s far too granular to use directly.

The real challenge - and where the magic happens - is abstraction:

- Smoothing noise

- Summarizing short windows of motion

- Turning physics into stable, privacy-safe signals ML models can work with
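The first two steps can be sketched in a few lines. This is a simplified illustration under our own assumptions, not the ContextSDK pipeline: we use exponential smoothing as the noise filter, one-second windows, and three summary statistics per window. The function names, `alpha` and the window length are hypothetical; the 100 Hz rate is the approximate figure quoted above.

```python
from statistics import mean, pstdev

SAMPLE_RATE_HZ = 100  # approximate rate mentioned in the text


def exponential_smooth(samples, alpha=0.2):
    """Low-pass filter: each output blends the new sample with the previous output."""
    smoothed = []
    prev = None
    for sample in samples:
        prev = sample if prev is None else alpha * sample + (1 - alpha) * prev
        smoothed.append(prev)
    return smoothed


def summarize_windows(samples, window_seconds=1.0, sample_rate_hz=SAMPLE_RATE_HZ):
    """Collapse a one-axis signal into a few numbers per non-overlapping window."""
    size = int(window_seconds * sample_rate_hz)
    features = []
    # Only full windows are summarized; a trailing partial window is dropped.
    for start in range(0, len(samples) - size + 1, size):
        window = samples[start:start + size]
        features.append({
            "mean": mean(window),
            "std": pstdev(window),
            "range": max(window) - min(window),
        })
    return features
```

Calling `summarize_windows(exponential_smooth(z_axis))` on a few seconds of data turns hundreds of raw readings into a handful of per-window summaries, which is the kind of compact input a model can work with.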

This is how ContextSDK operates:

- All processing happens on-device

- No raw data leaves the phone

- Only high-level context signals are produced

Not “who” the user is.

Just what kind of moment this is.

Why We’re Sharing This

We don’t usually talk about internals. But understanding how context works at a physical level is core to how we think about product decisions.

Context isn’t guessed.

It’s sensed, simplified, and interpreted.

When apps understand the physical situation a user is in, they can:

- Wait when attention is low

- Act when receptivity is high

- Stop mistaking bad timing for bad UX

At the end of the day, context-aware systems don’t feel smarter because they know more about users.

They feel smarter because they respect the moment users are actually in.

And sometimes, that understanding starts with something very simple: a phone on a table, moving through the real world.